In the previous blog post of this series, I explained the basics of the attention mechanism and how it is used in the encoder-decoder setting. This mechanism forms the basis of the transformer architecture. Before we can dive into the core of it, there are a few essential concepts we need to understand.

In this post, I will cover an overview of the transformer architecture, and introduce the key concepts of Tokenization, Embeddings, and Positional Encodings.

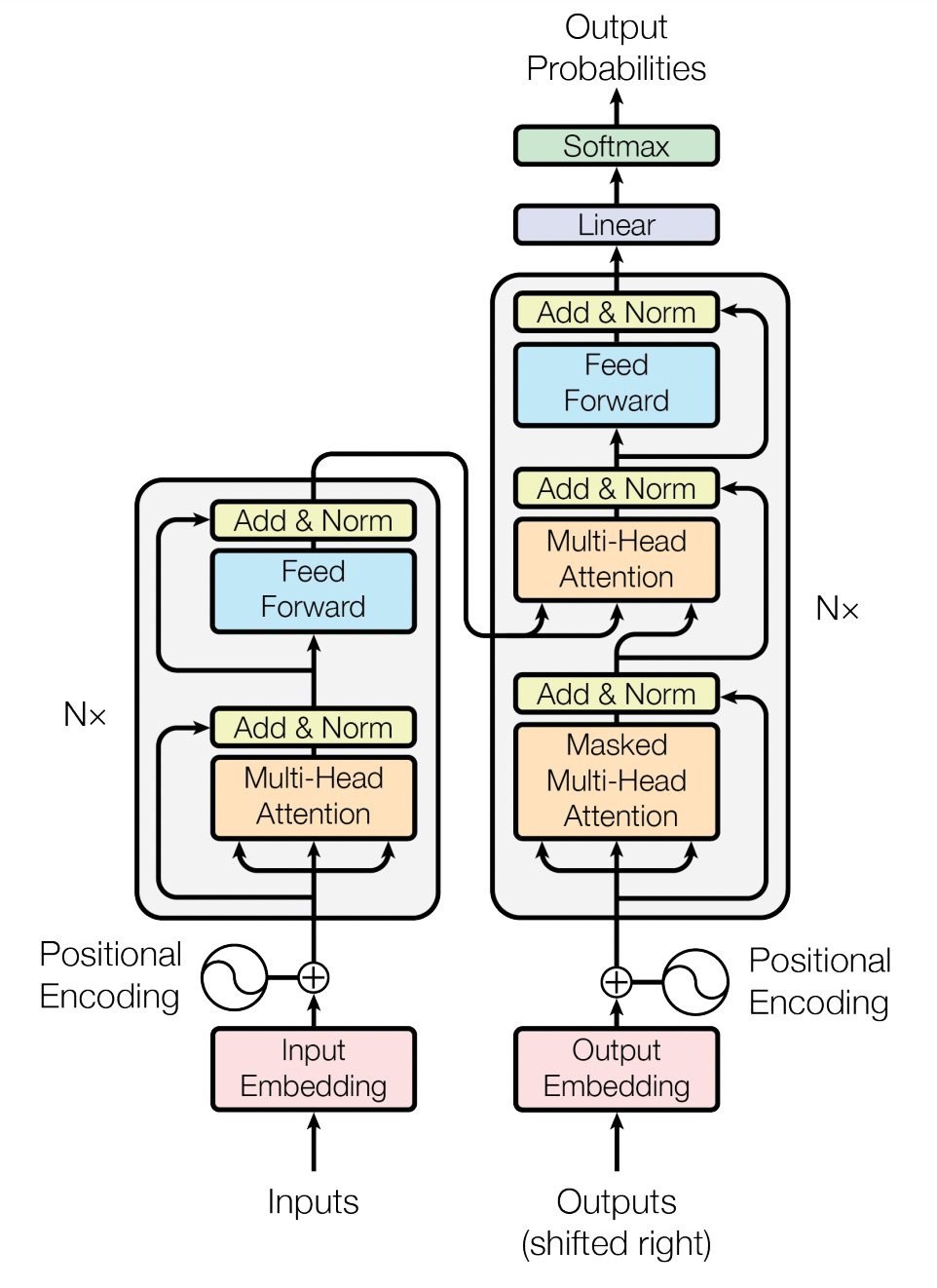

Transformer Architecture

The transformer architecture is similar to the RNN Seq2Seq architecture we discussed in the last post. It contains an encoder network that takes a sequence of vectors (these generally represent text that has been tokenized and passed through an embedding layer). This is followed by a decoder network that uses the encoded sequence to generate the output sequence (usually a probability distribution over the vocabulary at each output position). In this series, we will go through each of the layers in this architecture one by one and build them in PyTorch.

Tokenization, Embedding, and Positional Encoding Layers

Let us start with the Embedding and Positional Encoding layers of the model. These transform plain text into something that a neural network can understand, namely floating-point vectors.

Models cannot take plain text as input. Once the text has been converted into floating-point vectors, the model can perform the mathematical operations that transform the input into the desired output.

Tokenization

Converting a large block of text into a single vector can cause a lot of information loss. The typical way around this is to split the text into smaller units that can be mapped to vectors independently. One option is to split it into words. This, however, creates a huge vocabulary (on the order of hundreds of thousands of words), and each word has to be mapped to its own vector. A simpler option is to map each character to a vector (only 26 vectors for the English alphabet, ignoring case, digits, and punctuation). This, however, makes the number of tokens (the units that make up the text) very large, on the order of 100 characters for a typical sentence. The sweet spot is somewhere in between: tokens that occur frequently enough to justify having their own vectors, but that are also meaningful enough to carry a useful amount of information each.
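To make the trade-off concrete, here is a small sketch that splits the example sentence used later in this post at the two extremes. Splitting on whitespace and treating every character as a token are simplifying assumptions for illustration, not how a real tokenizer works.

```python
sentence = "Today is a good day for a run"

# Word-level: few tokens per sentence, but every distinct word needs its own vector.
word_tokens = sentence.split()

# Character-level: tiny vocabulary, but many more tokens per sentence.
char_tokens = list(sentence)

print("word tokens:", len(word_tokens), "unique:", len(set(word_tokens)))
print("char tokens:", len(char_tokens), "unique:", len(set(char_tokens)))
```

Even on this one sentence the character-level sequence is several times longer than the word-level one, while its vocabulary stays small. Subword tokenizers aim for a point between these two.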

There are many different tokenization algorithms used in the literature, but for the purpose of this blog series, we will stick to the algorithm used in the “Attention is all you need” paper [1]. The algorithm used is called Byte-Pair Encoding [2]. I will not be covering the algorithm in this series, but I encourage readers to check out this blog on it.

The only thing that needs to be kept in mind is that this algorithm splits up the chunk of text into small units and then maps each unit to a unique integer (token).

So a sentence like “Today is a good day for a run” would then be encoded into a sequence of integers like [2323, 23234, 3465, 788, 45678, 63224, 92, 753, 3247, 8837]. There can be more integers than words, because a single word may be split into several tokens. We can then map each of these integers to a vector that represents the token. Tokenization has no layer of its own, since there are no trainable weights (although the vocabulary is inferred from the data), and is therefore a part of the pre-processing module for our dataset. You may notice that tokenization is omitted from the transformer architecture image. Nevertheless, it is an important concept and essential to all language models.
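The text-to-integer step can be sketched with a toy vocabulary. This is not Byte-Pair Encoding: the helper names `build_vocab`, `encode`, and `decode` are hypothetical, and splitting on whitespace is an assumption made only to keep the example short.

```python
def build_vocab(corpus):
    """Assign each distinct whitespace-separated unit an integer id (toy stand-in for a trained tokenizer)."""
    vocab = {}
    for text in corpus:
        for unit in text.split():
            if unit not in vocab:
                vocab[unit] = len(vocab)
    return vocab


def encode(text, vocab):
    """Map text to a list of integer token ids."""
    return [vocab[unit] for unit in text.split()]


def decode(ids, vocab):
    """Map token ids back to text by inverting the vocabulary."""
    inverse = {index: unit for unit, index in vocab.items()}
    return " ".join(inverse[index] for index in ids)


vocab = build_vocab(["Today is a good day for a run"])
ids = encode("Today is a good day for a run", vocab)
print(ids)                 # [0, 1, 2, 3, 4, 5, 2, 6]
print(decode(ids, vocab))  # Today is a good day for a run
```

Note that the repeated word "a" maps to the same id both times: the integer identifies the token, not its position in the sentence.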

Embedding Layer

Once we have a sequence of integers (tokens), we need to map each of these integers to a floating-point vector. To do this we use something called an Embedding Layer. The embedding layer is a lookup table that maps each integer representing a unique token to the vector for that token. One question that needs to be answered is how we decide on the values of the vectors for each token. The solution is to learn these values from the data itself. The vectors are randomly initialized for each token, and are then learned from the data during training.
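As a quick illustration of the lookup, the sketch below uses PyTorch's `nn.Embedding` with a toy vocabulary of 10 tokens and 4-dimensional vectors (both sizes are arbitrary assumptions) and checks that embedding a token is the same as indexing a row of the weight matrix.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Toy sizes chosen only for illustration.
embedding = nn.Embedding(num_embeddings=10, embedding_dim=4)

tokens = torch.tensor([3, 7, 3])
vectors = embedding(tokens)  # shape: (3, 4)

# The layer is a lookup table: each token id selects one row of the weight matrix.
print(torch.equal(vectors, embedding.weight[tokens]))

# The same token id always maps to the same vector.
print(torch.equal(vectors[0], vectors[2]))

# The rows are trainable parameters, randomly initialized and updated during training.
print(embedding.weight.requires_grad)
```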

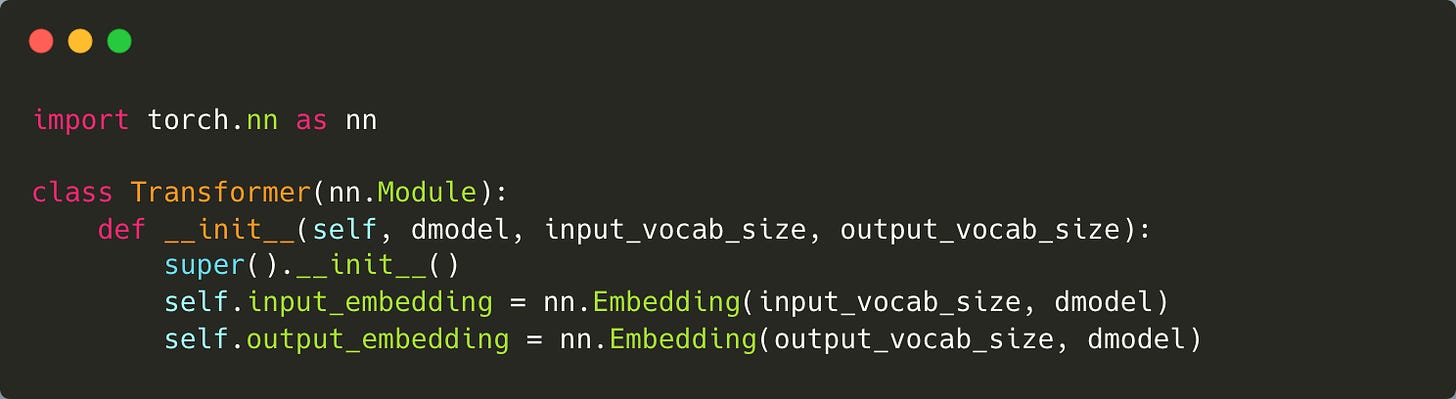

In PyTorch, this is done using an Embedding layer. Let us start by creating an outline for our Transformer model and initialize an Embedding layer.

NOTE: Code has been added as an image to improve readability, entire source code has been shared at the end.
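A minimal sketch of such an outline could look like the following. The class layout, the `embed` helper, and the names `src_vocab_size`, `tgt_vocab_size`, and `d_model` are assumptions chosen to match the description in this post; the full source linked at the end may differ.

```python
import torch
import torch.nn as nn


class Transformer(nn.Module):
    """Outline only: the encoder and decoder layers are added in later posts."""

    def __init__(self, src_vocab_size, tgt_vocab_size, d_model=512):
        super().__init__()
        # Separate lookup tables for the input and output vocabularies.
        self.input_embedding = nn.Embedding(src_vocab_size, d_model)
        self.output_embedding = nn.Embedding(tgt_vocab_size, d_model)

    def embed(self, src_tokens, tgt_tokens):
        # (batch, seq_len) integer ids -> (batch, seq_len, d_model) float vectors
        return self.input_embedding(src_tokens), self.output_embedding(tgt_tokens)


# Hypothetical vocabulary sizes and random token ids, just to check the shapes.
model = Transformer(src_vocab_size=1000, tgt_vocab_size=1200)
src = torch.randint(0, 1000, (2, 10))
tgt = torch.randint(0, 1200, (2, 7))
src_vec, tgt_vec = model.embed(src, tgt)
print(src_vec.shape, tgt_vec.shape)
```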

Two important parameters are used here. The first, the vocabulary size (one *_vocab_size parameter for each of the input and output vocabularies), is usually provided by the tokenizer once it has parsed our dataset and determined the number of unique tokens present in it. The second parameter, d_model, is the size of the vector that each token is mapped to. This is a hyperparameter that needs to be fixed. In the Transformer paper, it is set to 512.

Notice that we have two separate embedding layers for input and output. This is because, in general for Seq2Seq tasks, the input sequence and output sequence belong to different languages and vocabularies. For tasks such as Question-Answering, these may be the same, as usually they belong to the same language and vocabulary.

Positional Encoding

The final part of the initial layers is the positional encoding layer. The need for this layer will become evident when we review how the attention layers work, but for now, all we need to understand is that it adds information about the position of a vector in the sequence. This helps us differentiate between sentences like “My cat eats fish” and “My fish eats cat”, which contain the same words, and probably the same tokens, but have entirely different meanings. In models like RNNs, position is built in, since each timestep depends on information from the previous timestep. In attention, however, all tokens are processed in parallel, so the position information has to be supplied explicitly.
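The two example sentences can be compared directly. The sketch below assumes a whitespace split as a stand-in for a real tokenizer and shows that, once order is ignored, the two sentences are indistinguishable.

```python
from collections import Counter

first = "My cat eats fish".split()
second = "My fish eats cat".split()

# The sequences differ...
same_order = first == second

# ...but as unordered collections of tokens they are identical.
same_tokens = Counter(first) == Counter(second)

print(same_order, same_tokens)  # False True
```

Any model that treats its input as an unordered collection of token vectors would therefore see the same input for both sentences, which is why position information is added.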

Positional encodings/embeddings are added to the vectors output by the token Embedding layer. These are fixed encodings that are added based on the position of the vector in the sequence. In the original Transformer paper, these encodings are sinusoidal vectors.

They are generated using the following formulae:

$$PE_{(pos,\,2i)} = \sin\left(\frac{pos}{10000^{2i/d_{model}}}\right)$$

$$PE_{(pos,\,2i+1)} = \cos\left(\frac{pos}{10000^{2i/d_{model}}}\right)$$

Where pos is the position of the token in the sequence, and i indexes pairs of dimensions in the vector. The even dimensions (2i) contain a sine wave and the odd dimensions (2i+1) contain a cosine wave. The frequency of these waves depends on the dimension index i, not on the token position: each pair of dimensions oscillates at its own rate as pos increases. The wavelengths form a geometric progression from 2π to 10000 · 2π. It is hypothesized that this would allow the model to easily learn to attend by relative positions, since for any fixed offset k, PE(pos+k) can be represented as a linear function of PE(pos).
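The linearity claim can be checked with the angle-addition identities. As a short worked derivation, write $\omega_i = 1/10000^{2i/d_{model}}$ for the frequency of the $i$-th sine/cosine pair (the symbol $\omega_i$ is introduced here only for convenience). Then for any fixed offset $k$:

$$
\begin{pmatrix} \sin(\omega_i (pos + k)) \\ \cos(\omega_i (pos + k)) \end{pmatrix}
=
\begin{pmatrix} \cos(\omega_i k) & \sin(\omega_i k) \\ -\sin(\omega_i k) & \cos(\omega_i k) \end{pmatrix}
\begin{pmatrix} \sin(\omega_i\, pos) \\ \cos(\omega_i\, pos) \end{pmatrix}
$$

The matrix depends only on the offset $k$ and the dimension pair $i$, not on $pos$, so shifting a position by $k$ amounts to applying the same fixed rotation to each pair of dimensions.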

The authors also experimented with learned positional encodings and found that the two versions produced nearly identical results. They opted for the fixed sinusoidal version because it may allow the model to extrapolate to sequence lengths longer than those seen during training (although later research found that this extrapolation does not work well in practice). Nevertheless, the sequence length (range of pos) is generally fixed during training time.

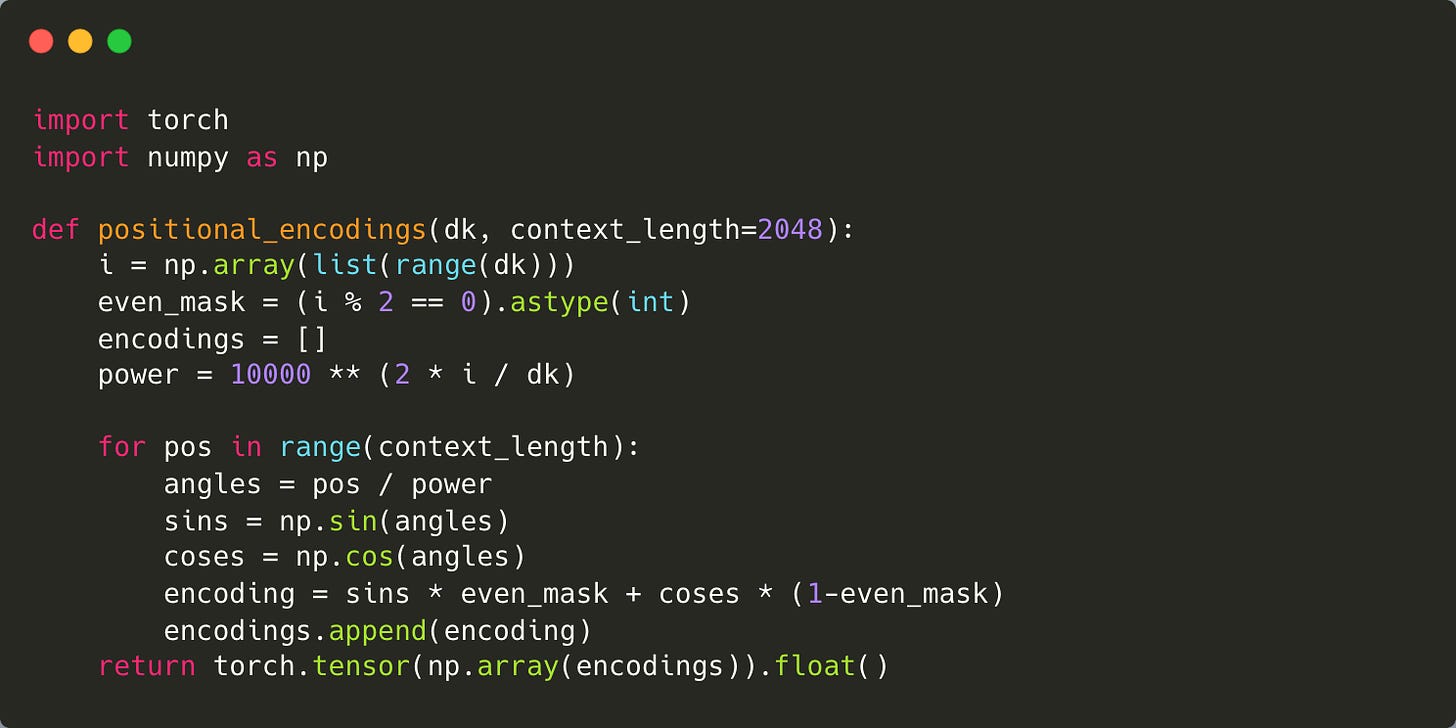

It is relatively easy to implement this in PyTorch:

NOTE: Code has been added as an image to improve readability, entire source code has been shared at the end.
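One possible implementation is sketched below. The class name and the use of `torch.where` with a boolean mask are assumptions that follow the description in this post; the parameter names `d_k` and `context_length` mirror the ones discussed here, and the full source linked at the end may be organized differently.

```python
import torch
import torch.nn as nn


class PositionalEncoding(nn.Module):
    def __init__(self, d_k, context_length):
        super().__init__()
        positions = torch.arange(context_length, dtype=torch.float32).unsqueeze(1)  # (context_length, 1)
        dims = torch.arange(d_k)  # (d_k,)

        # Dimensions 2i and 2i+1 share the same exponent 2i / d_k.
        exponents = (dims - dims % 2).float() / d_k
        angles = positions / torch.pow(torch.tensor(10000.0), exponents)  # (context_length, d_k)

        # Mask selects sine for even dimensions and cosine for odd dimensions.
        even_mask = dims % 2 == 0
        encodings = torch.where(even_mask, torch.sin(angles), torch.cos(angles))

        # A buffer is saved with the module and moved across devices, but is not trained.
        self.register_buffer("encodings", encodings)

    def forward(self, x):
        # x: (batch, seq_len, d_k); add the encodings for the first seq_len positions.
        seq_len = x.size(1)
        return x + self.encodings[:seq_len]


pos_enc = PositionalEncoding(d_k=512, context_length=128)
x = torch.zeros(2, 10, 512)  # stand-in for the token embedding output
print(pos_enc(x).shape)
```

Precomputing the table once at initialization keeps the forward pass to a single slice and addition.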

There are two parameters here. The first is d_k, the dimension of the vectors. This needs to match the dimension of the Embedding layer, since the encodings are added to the Embedding layer outputs. The second is context_length, the maximum number of positions. This is generally fixed so that all of the encodings can be generated once during initialization and reused later. We use a mask to combine the even and odd dimensions (sine and cosine) into a single vector. The encodings are stacked into a 2D tensor of size (context_length, d_k). Each row in this tensor is the positional encoding for a particular token position. During training and inference, we look up the row for each position and add it to the embedding vector at that position.

Note that the transformer architecture, like RNNs, has in principle no limit on the length of the sequence it can process. However, these positional embeddings act as a self-imposed limit, as the model does not perform well when they are extrapolated to positions beyond the range the model was trained with. People have come up with various solutions to this problem such as Rotary Position Embeddings [3], T5 bias [4], Attention with Linear Biases [5], and many more. Exploring research in this area is interesting, and I encourage readers to investigate further on their own.

Summary

With this, we have covered the key mechanisms by which plain text is transformed into inputs that a transformer model will understand.

To summarise:

Neural Networks work with numerical vectors and cannot understand plain text.

To overcome this, text is broken down into smaller units called tokens, and each unique token is assigned a unique integer by a process called Tokenization.

Each unique token is then assigned a vector using an Embedding layer. These vectors are learnable and their values are learned by the model as it trains on data.

To preserve positional information of these vectors, we add Positional Embeddings to the token embeddings. These embeddings are fixed sinusoidal waves evaluated at the token position, with a different frequency for each pair of vector dimensions.

REFERENCES

[1] Vaswani, Ashish, et al. "Attention is all you need." Advances in neural information processing systems 30 (2017).

[2] Britz, Denny, et al. "Massive exploration of neural machine translation architectures." arXiv preprint arXiv:1703.03906 (2017).

[3] Su, J., et al. "Enhanced transformer with rotary position embedding." 2021. DOI: https://doi.org/10.1016/j.neucom (2023).

[4] Raffel, Colin, et al. "Exploring the limits of transfer learning with a unified text-to-text transformer." Journal of machine learning research 21.140 (2020): 1-67.

[5] Press, Ofir, Noah A. Smith, and Mike Lewis. "Train short, test long: Attention with linear biases enables input length extrapolation." arXiv preprint arXiv:2108.12409 (2021).

SOURCE CODE:

The entire source code for this series can be found here: https://github.com/chrizandr/DL_Exp/blob/master/transformers/transformer.py